How it Works

Fleet Function is a new serverless technology built on top of Node.js. It lets you keep developing as you would in a Node.js environment on your own infrastructure, without having to worry about managing that infrastructure.

We built Fleet on top of Node.js so that developers can keep building their applications without having to learn new APIs or being tied to a specific platform.

Fleet runs in a Node.js 14.x environment, but some APIs are restricted: they either make little sense in the context of a function or are limited for security reasons. See the limitations documentation to learn more.

To understand how Fleet scales its functions and runs them safely and quickly, let's unpack some of the differences.

Runtime APIs

Learn more about the Node.js APIs available in Fleet functions.

Isolates

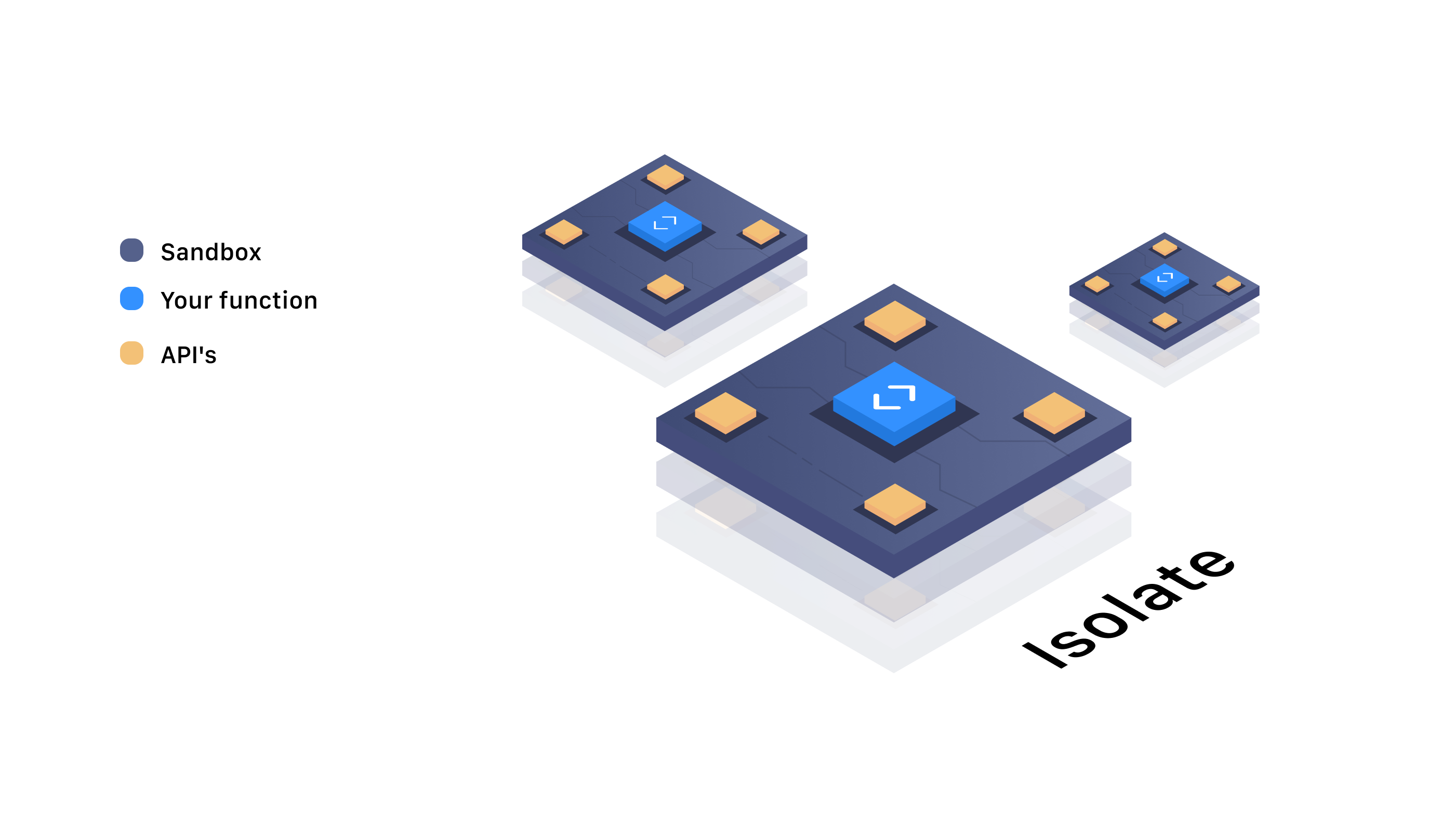

Node.js uses the V8 engine under the hood to interpret and execute JavaScript code. V8 runs code inside isolates, and each isolate executes in its own safe, separate environment.

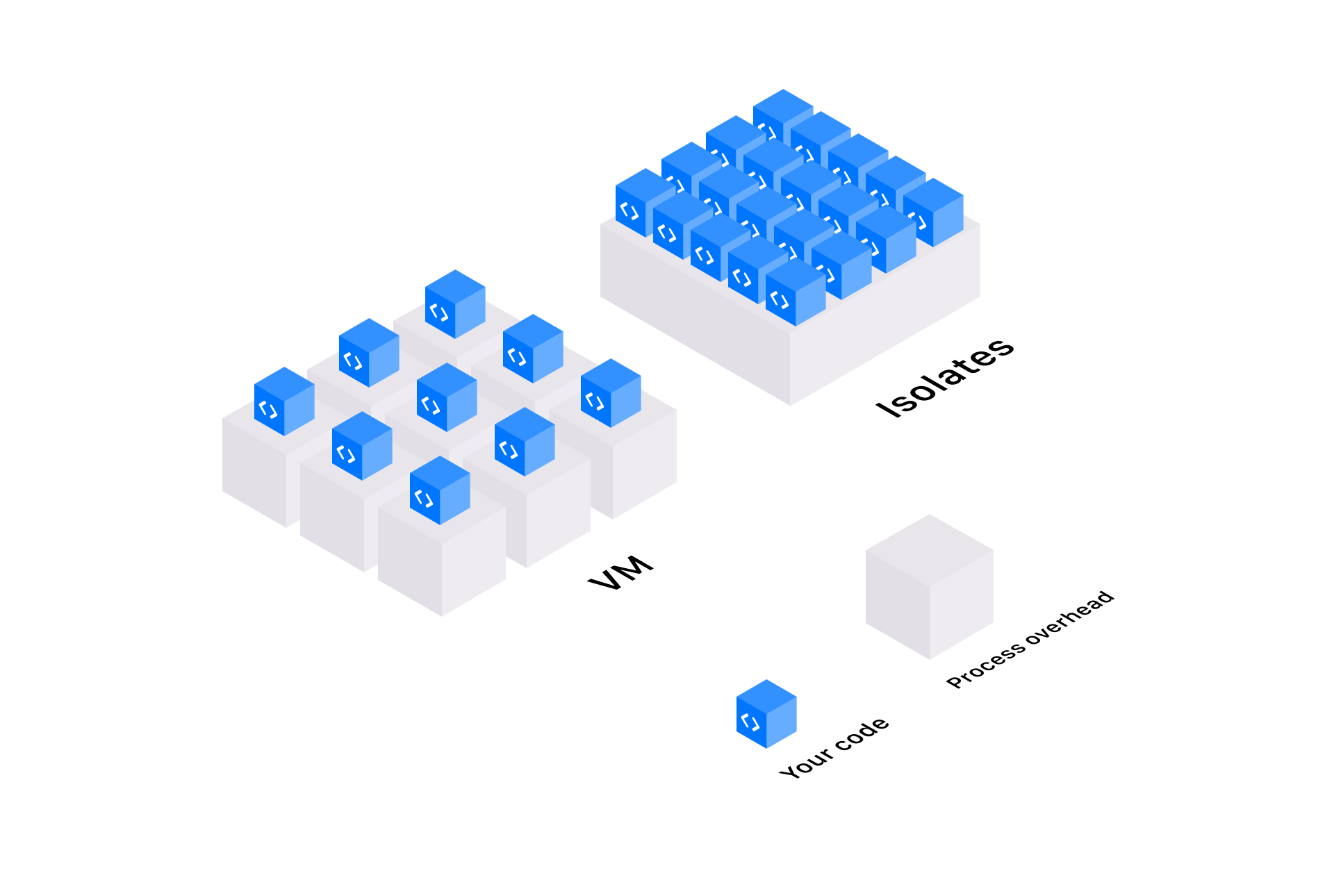

A Node.js runtime environment in Fleet differs from other serverless technologies. Unlike other serverless providers, which use processes in containers that each run an instance of a language runtime, Fleet pays the overhead of starting Node.js only once, when a container starts. That process can then execute scripts isolated from one another, either creating an isolate for each function call or reusing the same function to execute the call (learn more about this below). An isolate can start about a hundred times faster than a Node.js process in a container or virtual machine. Notably, at startup, isolates consume an order of magnitude less memory.

An isolate has its own scope. In Fleet, functions are executed in isolation, with their own memory, CPU, and context, and each function has access to the available Node.js APIs, so you can write a typical Node.js function.
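Node's built-in `worker_threads` module offers a way to see this kind of isolation locally, because each `Worker` runs in its own V8 isolate with its own heap and global scope. The sketch below is a minimal illustration under that assumption, not Fleet's actual runtime: `invokeInIsolate` and `functionSource` are hypothetical names. Each call increments a global counter, and both calls report `1` because no global state leaks from one isolate to the next.

```javascript
// Minimal sketch: run each call in a fresh worker thread. Every Worker has
// its own V8 isolate, so globals set in one call are invisible to the next.
// `invokeInIsolate` and `functionSource` are hypothetical, not Fleet APIs.
const { Worker } = require("worker_threads");

// Hypothetical function body, evaluated inside the worker's own isolate.
const functionSource = `
  const { parentPort, workerData } = require("worker_threads");
  // The global counter starts out undefined in every new isolate.
  globalThis.counter = (globalThis.counter || 0) + 1;
  parentPort.postMessage({ counter: globalThis.counter, echo: workerData });
`;

function invokeInIsolate(payload) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(functionSource, { eval: true, workerData: payload });
    worker.once("message", (result) => {
      resolve(result);
      worker.terminate(); // release the isolate once the call has answered
    });
    worker.once("error", reject);
  });
}

async function main() {
  const first = await invokeInIsolate("a");
  const second = await invokeInIsolate("b");
  return [first, second];
}

main().then(([first, second]) => {
  // Each isolate started with an empty global scope, so both counters are 1.
  console.log(first.counter, second.counter);
});
```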

In Fleet, functions can run for a short or long time (see the limits documentation to learn more), but a function can be terminated for a few reasons:

- A suspicious script

- Exceeding its individual resource limits
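The resource-limit case can be approximated locally with the same `worker_threads` module. This minimal sketch assumes that a per-call time budget can be modeled as a wall-clock timeout and that memory limits map to the Worker's `resourceLimits` option; `runWithLimits`, `LIMITS`, and `TIMEOUT_MS` are hypothetical names and values, not Fleet's real limits. A script that exceeds its budget has its isolate terminated instead of taking the whole process down.

```javascript
// Minimal sketch: enforce per-call limits on a script running in its own
// isolate. LIMITS and TIMEOUT_MS are hypothetical values, not Fleet's limits.
const { Worker } = require("worker_threads");

const LIMITS = { maxOldGenerationSizeMb: 32, maxYoungGenerationSizeMb: 8 };
const TIMEOUT_MS = 1000;

function runWithLimits(source, timeoutMs = TIMEOUT_MS) {
  return new Promise((resolve) => {
    const worker = new Worker(source, { eval: true, resourceLimits: LIMITS });

    // Wall-clock budget: terminate the isolate if it has not answered in time.
    const timer = setTimeout(() => {
      worker.terminate();
      resolve({ status: "terminated", reason: "timeout" });
    }, timeoutMs);

    worker.once("message", (value) => {
      clearTimeout(timer);
      worker.terminate();
      resolve({ status: "ok", value });
    });

    // Uncaught exceptions and memory-limit violations
    // (ERR_WORKER_OUT_OF_MEMORY) are reported on the "error" event.
    worker.once("error", (err) => {
      clearTimeout(timer);
      resolve({ status: "terminated", reason: err.code || err.message });
    });
  });
}

const runs = Promise.all([
  // A well-behaved script answers normally.
  runWithLimits(`require("worker_threads").parentPort.postMessage(2 + 2);`),
  // A runaway script never yields, so the timeout terminates its isolate.
  runWithLimits(`while (true) {}`),
]);

runs.then((results) => console.log(results));
```

Because the runaway loop lives in a separate isolate and thread, the main thread's timer still fires and `terminate()` can stop it.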

Security

Learn more about how Fleet protects your code.

Compute per Request and Compute Time

Fleet functions are well suited to both short and long executions, up to a limit of 5 minutes. Function usage is calculated from the number of requests and the execution time of each function.

Execution time is measured in 1 ms increments, since some functions can finish in less than 1 ms.

Even if your function handles several requests asynchronously, every request is still counted.
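The two dimensions, request count and execution time, can be illustrated with a small meter. This is a minimal sketch assuming each execution is rounded up to the next whole millisecond (so a 0.3 ms call counts as 1 ms); `UsageMeter` is a hypothetical helper, not part of Fleet. Wrapping an async handler shows that calls running concurrently are still counted one by one.

```javascript
// Minimal sketch of per-request metering. UsageMeter is hypothetical, and the
// rounding rule (round up to the next whole millisecond) is an assumption.
class UsageMeter {
  constructor() {
    this.requests = 0;
    this.billedMs = 0;
  }

  // Record one request that ran for `durationMs` (possibly a fraction of 1 ms).
  record(durationMs) {
    this.requests += 1;
    this.billedMs += Math.max(1, Math.ceil(durationMs));
  }

  // Time every call of an async handler, even when calls overlap in time.
  wrap(handler) {
    return async (...args) => {
      const start = process.hrtime.bigint();
      try {
        return await handler(...args);
      } finally {
        const elapsedNs = process.hrtime.bigint() - start;
        this.record(Number(elapsedNs) / 1e6);
      }
    };
  }
}

const meter = new UsageMeter();
meter.record(0.3); // counted as 1 ms
meter.record(12.1); // counted as 13 ms

// Three overlapping async calls are still three separate requests.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));
const handler = meter.wrap((ms) => sleep(ms));
const done = Promise.all([handler(5), handler(5), handler(5)]);

done.then(() => console.log(meter.requests, meter.billedMs));
```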

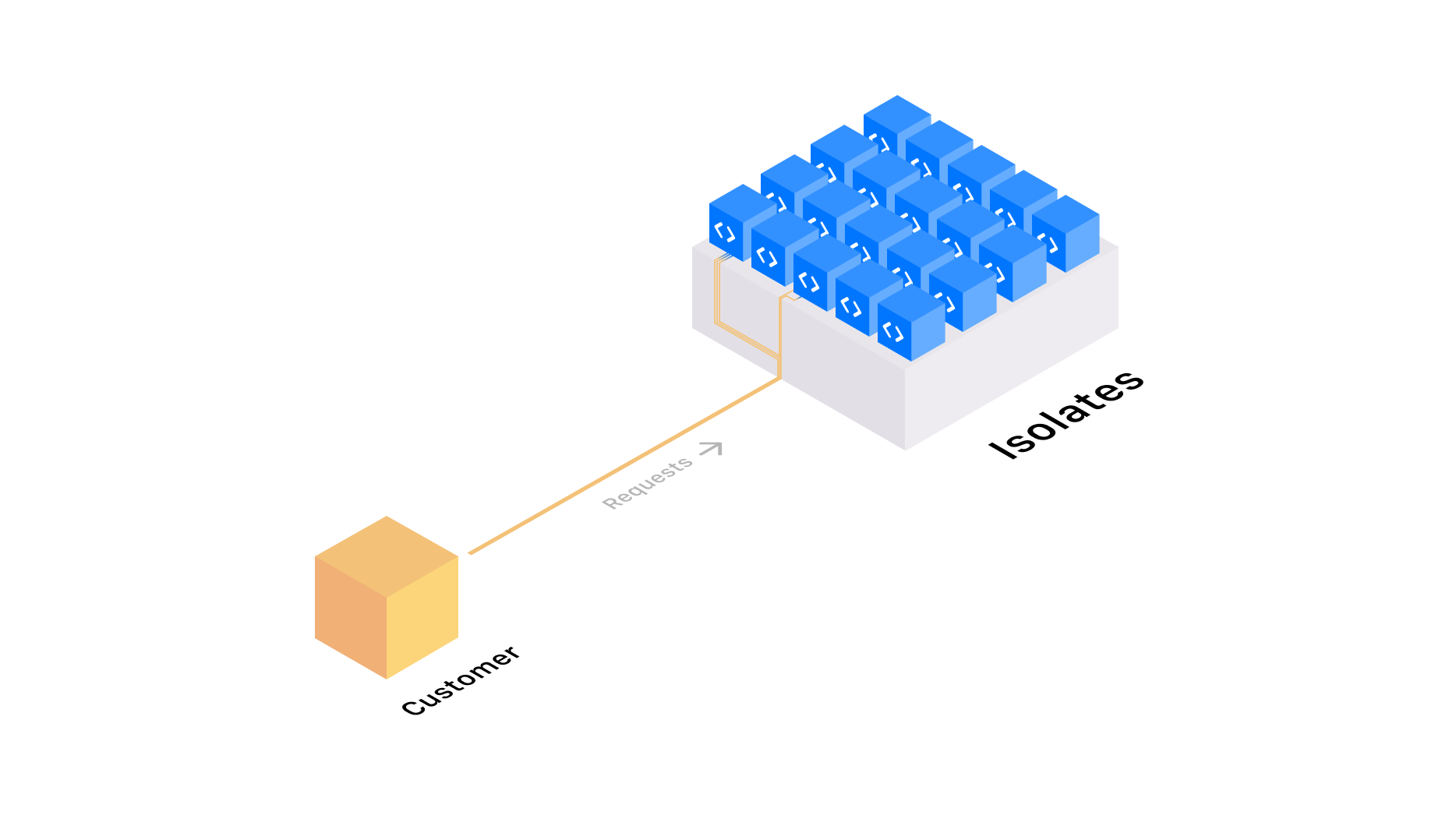

Execution and Provisioning

In a serverless environment, new instances are provisioned and scaled horizontally as requests arrive, with a new runtime instantiated to handle each call. On other platforms, a runtime never handles more than one call at a time; Fleet Function, by contrast, lets you configure your function to handle more than one call at the same time.

Handling more than one call in the same function comes close to a typical Node.js application built on Node.js's asynchronous model. This brings some challenges: you must avoid blocking the event loop so your function is not overloaded, and you should configure the number of calls your function can process concurrently to avoid slow requests and a blocked event loop. Taking advantage of this is optional. If you know Node.js well and know how to manage the event loop, well-written code need not suffer losses in response time or increased provisioning limits.
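One way to cap how many calls a single instance works on at once is a small promise-based limiter in front of the handler. This is a minimal sketch, assuming the cap is enforced inside your own code; `createConcurrencyLimiter`, `maxConcurrency`, and `handleRequest` are hypothetical names, not Fleet configuration. The handler awaits a timer instead of spinning the CPU, so the event loop stays free while other calls wait their turn.

```javascript
// Minimal sketch of a concurrency cap for async calls. The names are
// hypothetical and do not correspond to a real Fleet setting.
function createConcurrencyLimiter(maxConcurrency) {
  let active = 0;
  const queue = [];

  // Start queued tasks while there is free capacity.
  const next = () => {
    if (active >= maxConcurrency || queue.length === 0) return;
    active += 1;
    const { task, resolve, reject } = queue.shift();
    Promise.resolve()
      .then(task)
      .then(resolve, reject)
      .finally(() => {
        active -= 1;
        next();
      });
  };

  return (task) =>
    new Promise((resolve, reject) => {
      queue.push({ task, resolve, reject });
      next();
    });
}

const limit = createConcurrencyLimiter(2);
let inFlight = 0;
let peak = 0;

// Non-blocking handler: it awaits I/O-like work instead of busy-looping.
async function handleRequest(id) {
  inFlight += 1;
  peak = Math.max(peak, inFlight);
  await new Promise((resolve) => setTimeout(resolve, 20));
  inFlight -= 1;
  return `done ${id}`;
}

// With a cap of 2, at most two handlers are ever in flight at once.
const results = Promise.all([1, 2, 3, 4, 5].map((id) => limit(() => handleRequest(id))));
results.then((r) => console.log(r, "peak:", peak));
```

A CPU-bound loop inside `handleRequest` would defeat this design: while it runs, no other queued call can start, however high the cap is set.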

Handling more than one call in your function lets it serve more concurrent requests. As a result, you can reach higher levels of concurrency than on other serverless platforms.

Function Scaling

Get a deeper understanding of how Fleet scaling works and how to leverage it.